Software makes more ethically differentiated decisions

Autonomous driving: New algorithm distributes risk fairly

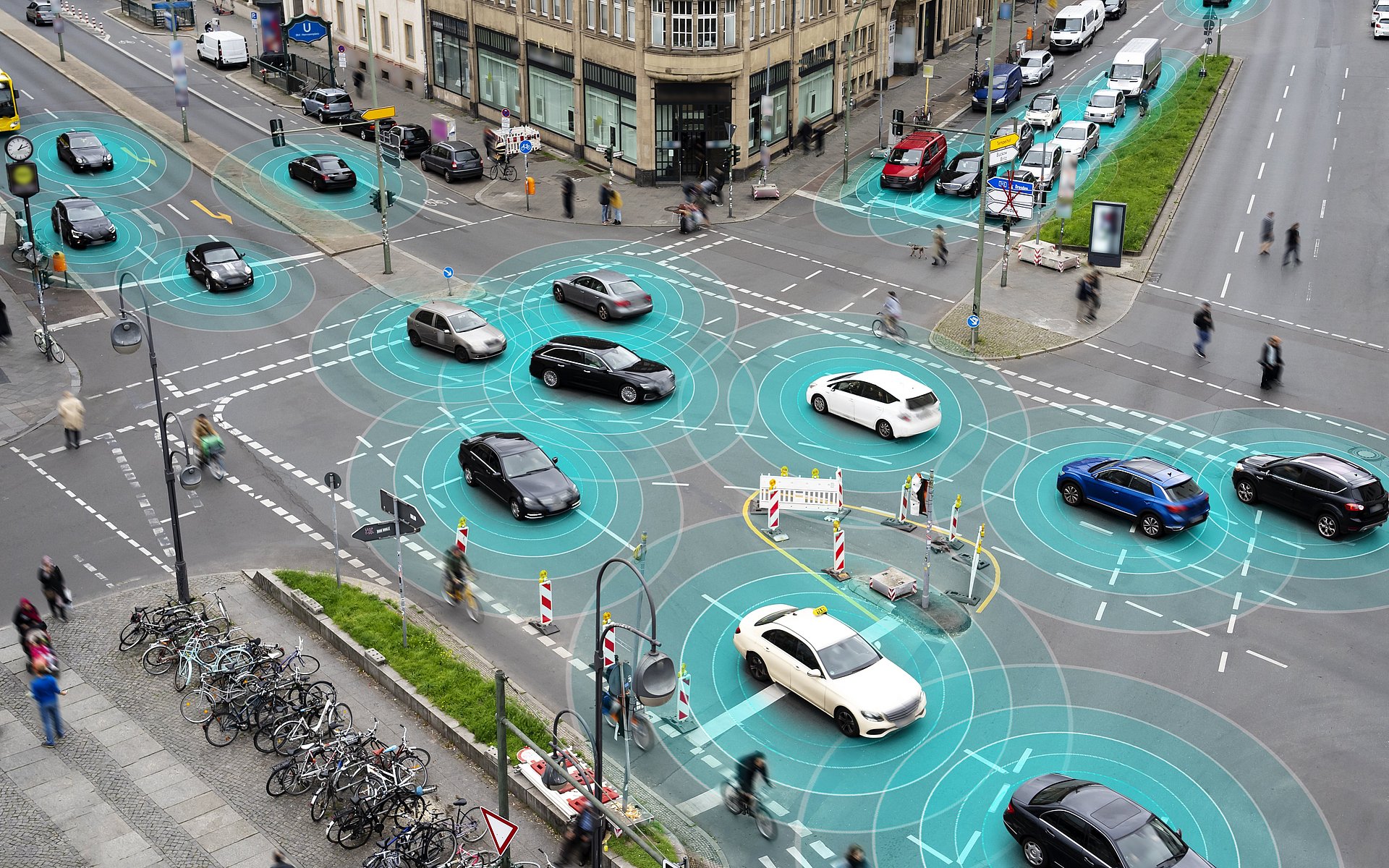

Technical feasibility is not the only hurdle to clear before autonomous vehicles can be allowed on the road on a large scale. Ethical questions play an important role in the development of the corresponding algorithms: software has to be able to handle unforeseeable situations and make the necessary decisions when an accident is imminent. Researchers at TUM have now developed the first ethical algorithm that distributes risk fairly instead of operating on an either/or principle. It was tested in approximately 2,000 critical scenarios across various road types and regions such as Europe, the USA and China. The research, published in the journal "Nature Machine Intelligence", is the joint result of a partnership between the Chair of Automotive Technology and the Chair of Business Ethics at TUM’s Institute for Ethics in Artificial Intelligence (IEAI).

Maximilian Geisslinger, a scientist at the TUM Chair of Automotive Technology, explains the approach: "Until now, autonomous vehicles were always faced with an either/or choice when encountering an ethical decision. But road traffic can't necessarily be divided into clear-cut, black-and-white situations; rather, the countless shades of gray in between have to be considered as well. Our algorithm weighs various risks and makes an ethical choice from among thousands of possible behaviors, and it does so in a fraction of a second."

More options in critical situations

The ethical principles that guide the software's risk evaluation come from a written recommendation drawn up in 2020 by an expert panel on behalf of the EU Commission. The recommendation includes basic principles such as priority for the worst-off and the fair distribution of risk among all road users. To translate these rules into mathematical calculations, the research team classified vehicles and people in road traffic according to the risk they pose to others and their respective willingness to take risks. A truck, for example, can cause serious damage to other road users, while in many scenarios it will itself suffer only minor damage. The opposite is true for a bicycle. Next, the algorithm was instructed not to exceed a maximum acceptable risk in each traffic situation. The research team also added variables to the calculation that account for the responsibility of road users, for example their responsibility to obey traffic rules.
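How such principles can become numbers is illustrated by the small sketch below. It is not the TUM implementation. It assumes that risk is a collision probability multiplied by the expected harm, that harm scales with a per-class vulnerability factor, and that a simple discount models responsibility for road users who break traffic rules. The class names, factors, discount and threshold (`VULNERABILITY`, `MAX_ACCEPTABLE_RISK`, `rule_breaker_discount`) are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical vulnerability factors: the share of a collision's severity that
# each class of road user bears. A truck absorbs little damage; a cyclist most of it.
VULNERABILITY = {"truck": 0.1, "car": 0.4, "bicycle": 1.0}

# Hypothetical upper bound on the risk any single road user may be exposed to.
MAX_ACCEPTABLE_RISK = 0.05


@dataclass
class Exposure:
    """Risk exposure of one road user along one candidate maneuver."""
    user_class: str
    collision_probability: float  # estimated probability of a collision (0..1)
    impact_severity: float        # normalized severity of the impact (0..1)
    obeys_rules: bool = True      # simple stand-in for the responsibility variables


def risk(e: Exposure, rule_breaker_discount: float = 0.5) -> float:
    """Risk = probability x harm, where harm depends on the user's vulnerability."""
    harm = e.impact_severity * VULNERABILITY[e.user_class]
    r = e.collision_probability * harm
    # Assumed responsibility rule: risk to a road user who breaks traffic rules
    # is weighted down, so the planner does not bear all the risk that user creates.
    return r if e.obeys_rules else r * rule_breaker_discount


def is_acceptable(exposures: list[Exposure]) -> bool:
    """A maneuver is admissible only if no road user exceeds the maximum acceptable risk."""
    return all(risk(e) <= MAX_ACCEPTABLE_RISK for e in exposures)


# Same collision probability and impact severity, very different risk:
truck = Exposure("truck", collision_probability=0.2, impact_severity=0.8)
bike = Exposure("bicycle", collision_probability=0.2, impact_severity=0.8)
print(round(risk(truck), 3), round(risk(bike), 3))  # 0.016 0.16
print(is_acceptable([truck]), is_acceptable([truck, bike]))  # True False
```

With identical collision probability and impact severity, the cyclist carries ten times the truck's risk in this sketch, so only the cyclist pushes the maneuver past the assumed threshold.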

Previous approaches handled critical situations on the road with only a small number of possible maneuvers; in unclear cases the vehicle simply stopped. The risk assessment now built into the researchers' code gives the vehicle more degrees of freedom while lowering the risk for everyone. An example illustrates the approach: an autonomous vehicle wants to overtake a bicycle while a truck is approaching in the oncoming lane. The software uses all available data about the surroundings and the individual road users. Can the bicycle be overtaken without entering the oncoming lane while still keeping a safe distance from it? What risk does each maneuver pose to each vehicle, and what risk do those vehicles pose to the autonomous vehicle itself? In unclear cases, the autonomous vehicle with the new software always waits until the risk to all road users is acceptable. Aggressive maneuvers are avoided, yet the vehicle doesn't simply freeze and slam on the brakes. Instead of a simple yes or no, it evaluates a large number of options.
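The overtaking example can be sketched as a selection over candidate maneuvers. This is a hedged illustration rather than the published algorithm. Each candidate carries precomputed risks for the autonomous vehicle ("ego"), the cyclist and the truck. Any candidate that exposes someone to more than an assumed maximum acceptable risk is discarded, and the rest are ranked by a cost that combines total risk, the spread of risk between road users, and the risk to the worst-off. The weights, the threshold, the maneuver names and all risk numbers are invented for illustration.

```python
from typing import NamedTuple, Optional

# Hypothetical cap on any single road user's risk.
MAX_ACCEPTABLE_RISK = 0.05

# Hypothetical weights for the three terms of the cost.
W_TOTAL, W_EQUALITY, W_WORST_OFF = 1.0, 1.0, 2.0


class Candidate(NamedTuple):
    name: str
    risks: dict[str, float]  # precomputed risk per road user for this maneuver


def ethical_cost(risks: dict[str, float]) -> float:
    values = list(risks.values())
    total = sum(values)                     # overall risk across all road users
    inequality = max(values) - min(values)  # how unevenly the risk is spread
    worst_off = max(values)                 # priority for the worst-off
    return W_TOTAL * total + W_EQUALITY * inequality + W_WORST_OFF * worst_off


def admissible(c: Candidate) -> bool:
    # Reject any maneuver that pushes a single road user past the cap.
    return all(r <= MAX_ACCEPTABLE_RISK for r in c.risks.values())


def choose(candidates: list[Candidate]) -> Optional[Candidate]:
    ok = [c for c in candidates if admissible(c)]
    # Lowest-cost admissible maneuver; None if nothing passes (a simplification).
    return min(ok, key=lambda c: ethical_cost(c.risks)) if ok else None


# Waiting behind the cyclist is always one of the candidates.
FOLLOW = Candidate("follow_cyclist", {"ego": 0.003, "bicycle": 0.005, "truck": 0.0})

truck_close = [
    Candidate("overtake_via_oncoming_lane", {"ego": 0.09, "bicycle": 0.001, "truck": 0.02}),
    Candidate("squeeze_past_in_own_lane", {"ego": 0.005, "bicycle": 0.08, "truck": 0.0}),
    FOLLOW,
]
truck_far = [
    Candidate("overtake_via_oncoming_lane", {"ego": 0.004, "bicycle": 0.001, "truck": 0.002}),
    FOLLOW,
]

print(choose(truck_close).name)  # follow_cyclist
print(choose(truck_far).name)    # overtake_via_oncoming_lane
```

Because following the cyclist is always among the candidates, this sketch waits while the truck is close and overtakes only once every road user's risk is below the cap, rather than braking abruptly. Returning `None` when no candidate is admissible is a simplification of the sketch, not a claim about how the TUM software handles that case.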

"Our framework puts the ethics of risk at the center"

"Until now, traditional ethical theories were often used to derive morally permissible decisions for autonomous vehicles. This ultimately led to a dead end, since in many traffic situations there was no alternative to violating one ethical principle or another," says Franziska Poszler, scientist at the TUM Chair of Business Ethics. "In contrast, our framework puts the ethics of risk at the center. This allows us to take probabilities into account and make more differentiated assessments."

The researchers emphasize that even algorithms based on risk ethics cannot guarantee accident-free road traffic, although they can make decisions grounded in the underlying ethical principles in every possible traffic situation. In future, further distinctions, such as cultural differences in ethical decision-making, will also need to be taken into account.

Software now to be tested in street traffic

So far, the algorithm developed at TUM has been validated in simulations. In the future, the software will be tested on real roads with the research vehicle EDGAR. The code containing the findings of this research is available as open-source software. TUM is thus contributing to the development of viable and safe autonomous vehicles.

M. Geisslinger, F. Poszler, and M. Lienkamp, “An ethical trajectory planning algorithm for autonomous vehicles,” Nat Mach Intell, 2023. DOI: 10.1038/s42256-022-00607-z

Project "ANDRE – AutoNomous DRiving Ethics"

https://www.ieai.sot.tum.de/research/andre-autonomous-driving-ethics/

Technical University of Munich

Corporate Communications Center

- Andreas Huber

- huber.a@tum.de

- presse@tum.de


Contacts for this article:

Franziska Poszler, M.Sc. | Maximilian Geißlinger, M.Sc.